Documentation - Apache Spark

Apache Spark™ Documentation: Setup instructions, programming guides, and other documentation are available for each stable version of Spark below: Spark 4.1.0

PySpark Overview — PySpark 4.1.0 documentation - Apache Spark

Dec 11, 2025 · PySpark combines Python’s learnability and ease of use with the power of Apache Spark to enable processing and analysis of data at any size for everyone familiar with Python. …

Getting Started — PySpark 4.1.0 documentation - Apache Spark

More guides shared with other languages, such as the Quick Start, are available under Programming Guides in the Spark documentation. There are live notebooks where you can try PySpark out without …

Structured Streaming Programming Guide - Spark 4.1.0 …

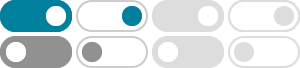

Types of time windows: Spark supports three types of time windows: tumbling (fixed), sliding and session. Tumbling windows are a series of fixed-sized, non-overlapping and contiguous time …
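
The three window types named in the snippet above can be sketched in plain Python. This is only an illustration of the window semantics, not Spark's API: the helper names (`tumbling_window`, `sliding_windows`, `session_windows`) are hypothetical, timestamps are assumed to be integer seconds, and windows are treated as half-open intervals `[start, end)`.

```python
from typing import List, Tuple

Window = Tuple[int, int]  # half-open interval [start, end), in seconds


def tumbling_window(ts: int, size: int) -> Window:
    """Return the single fixed-size, non-overlapping window containing ts."""
    start = ts - ts % size
    return (start, start + size)


def sliding_windows(ts: int, size: int, slide: int) -> List[Window]:
    """Return every window of length `size`, started every `slide`, that contains ts."""
    windows = []
    start = ts - ts % slide          # latest window start at or before ts
    while start + size > ts:         # window still covers ts
        windows.append((start, start + size))
        start -= slide
    return sorted(windows)


def session_windows(timestamps: List[int], gap: int) -> List[Window]:
    """Group events into sessions; a session is extended by any event arriving before it ends."""
    sessions: List[Window] = []
    for ts in sorted(timestamps):
        if sessions and ts < sessions[-1][1]:
            # Event falls inside the open session: push the session end out.
            sessions[-1] = (sessions[-1][0], ts + gap)
        else:
            # Gap exceeded (or first event): start a new session.
            sessions.append((ts, ts + gap))
    return sessions
```

With a slide shorter than the window length, one event belongs to several sliding windows, whereas a tumbling window always assigns it to exactly one. Session windows have no fixed size: their length depends on how closely the events follow each other.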

Spark 3.5.5 released - Apache Spark

We are happy to announce the availability of Spark 3.5.5! Visit the release notes to read about the new features, or download the release today.

Performance Tuning - Spark 4.1.0 Documentation

Apache Spark’s ability to choose the best execution plan among many possible options is determined in part by its estimates of how many rows will be output by every node in the …

Spark Streaming - Spark 4.1.0 Documentation

Spark Streaming is an extension of the core Spark API that enables scalable, high-throughput, fault-tolerant stream processing of live data streams. Data can be ingested from many sources …

Application Development with Spark Connect

With Spark 3.4 and Spark Connect, the development of Spark Client Applications is simplified, and clear extension points and guidelines are provided on how to build Spark Server Libraries, …

Structured Streaming Programming Guide - Spark 4.1.0 …

You express your streaming computation as a standard batch-like query, as you would on a static table, and Spark runs it as an incremental query on the unbounded input table.
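
The equivalence between a batch query over the whole input table and an incremental query over newly arrived rows can be illustrated with a small plain-Python sketch. It does not use Spark; the function names and the sample micro-batches are hypothetical, and a word count stands in for the query.

```python
from collections import Counter
from typing import Iterable


def batch_word_count(rows: Iterable[str]) -> Counter:
    """Batch-style query: count words over all rows of a static table."""
    counts: Counter = Counter()
    for line in rows:
        counts.update(line.split())
    return counts


def incremental_word_count(state: Counter, new_rows: Iterable[str]) -> Counter:
    """Incremental query: fold only the newly arrived rows into the running result."""
    state.update(batch_word_count(new_rows))
    return state


# Hypothetical micro-batches appended to the unbounded input table over time.
micro_batches = [["cat dog", "dog"], ["owl cat"], ["dog owl owl"]]

state: Counter = Counter()
input_table = []
for batch in micro_batches:
    input_table.extend(batch)                      # the table keeps growing
    state = incremental_word_count(state, batch)   # only new rows are processed
    # After each trigger, the incremental result matches a full batch recomputation.
    assert state == batch_word_count(input_table)
```

The query logic is written once, in batch form; only the way it is executed changes, so each trigger touches the new rows instead of rescanning the whole table.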

From/to pandas and PySpark DataFrames - Apache Spark

Since pandas API on Spark does not target 100% compatibility with both pandas and PySpark, users need some workarounds to port their pandas and/or PySpark code or get familiar …